This is not a marketing piece.

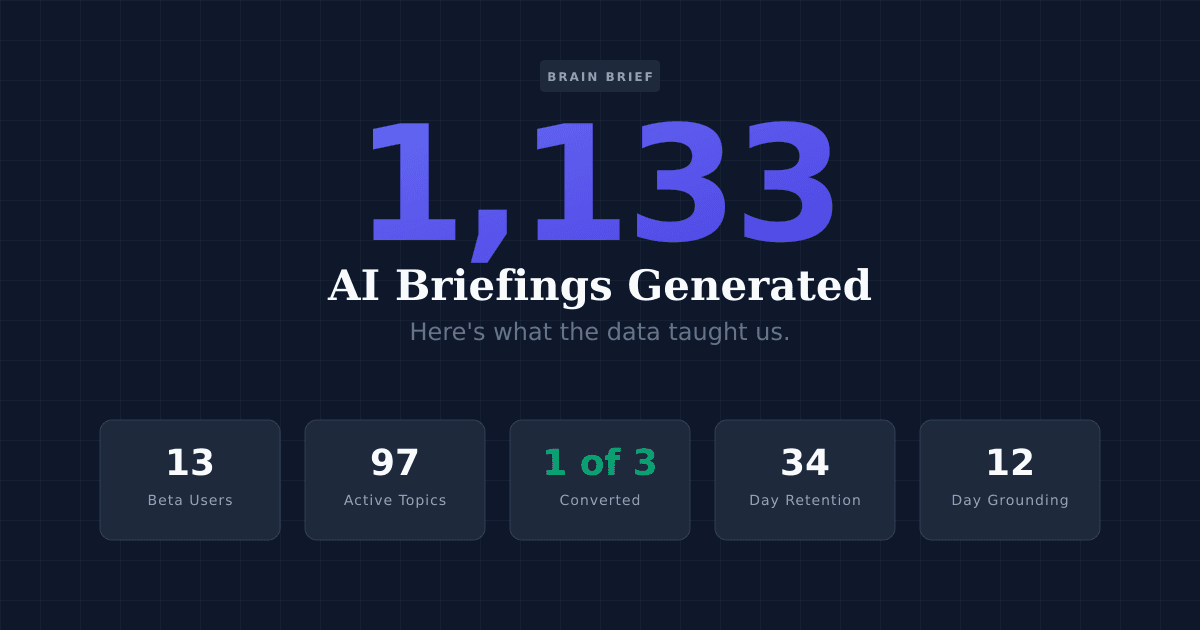

We're a small team, we have 13 users, and we've been running Brain Brief for under two months. The dataset we're working with is genuinely small — roughly 1,130 AI-generated briefings across 97 topics and 7 content categories (about 90 for real users and ~1,040 daily refreshes on our public topic pipeline). By the standards of most published research, this is a pilot study, not a conclusion.

But we think the data is worth sharing, for three reasons. First, no one else has published anything like it, because no one else has built quite this way. Second, some of what we found surprised us, and the surprises seem generalizable. Third, transparency is the only credibility available to a company at our stage. We'd rather show you the data and let you evaluate it than tell you what to believe about it.

Here's what 1,000 briefings taught us about how people stay informed.

1. The Setup

The information problem we're trying to solve is well-documented. The average knowledge worker spends 28% of their workday reading and answering emails, and a separate study by McKinsey estimates knowledge workers spend 1.8 hours per day searching for and gathering information. Simultaneously, research from Gallup suggests that most Americans feel they're not staying well-informed on the topics they care about, despite consuming more content than any previous generation.

The standard solutions — newsletters, RSS readers, news apps, social media feeds — share a common limitation: they're built for audiences, not individuals. They decide what matters across a population of subscribers. They can't know what specific corner of a topic is relevant to you specifically.

Brain Brief is our attempt to solve the selection problem rather than the volume problem. You tell it what you want to understand (up to 10 topics), and it generates a personalized briefing every morning covering only those things. No editorial curation, no algorithmic feed, no one-size-fits-all coverage.

The dataset: Between February and April 2026, Brain Brief generated approximately 1,130 briefings — about 90 for our 13 closed-beta users, and ~1,040 daily refreshes for our public topic pipeline — covering 97 distinct topics across 7 content categories. The retention and conversion numbers we discuss below are drawn from the 90-briefing user cohort, not the pipeline aggregate. We're publishing this analysis because the patterns are interesting, the sample is honest, and the questions it raises matter beyond our specific product.

2. The Anatomy of an AI Briefing

Every briefing starts with the same question: what happened in the last 24 hours across each of this user's topics that's worth knowing about?

Answering that question in a useful way requires more than summarization. It requires grounding — the briefing content has to come from actual, verifiable sources rather than the model's training data, which may be weeks or months out of date. We use Gemini with Google Search grounding to ensure every claim in every briefing is tied to a real, retrievable source.

The technical architecture: Rather than generating one unified briefing for all topics simultaneously, we run a parallel pipeline — one generation job per topic, per user, per day. A user following five topics gets five independent generation runs whose outputs are assembled into a single document. This matters more than it might seem: a single large prompt asking the model to cover five topics at once tends to produce homogenized output, with shorter coverage per topic and more hallucination risk as the context window fills. The per-topic parallel approach yields more specific, more grounded, and more appropriately sized coverage for each subject.

Output format: Each topic section runs approximately 150-200 words, structured around three key developments with brief context for each. The full briefing across multiple topics lands in the 600-800 word range — long enough to be substantive, short enough to read in the time it takes to finish a cup of coffee.

The grounding record: As of this writing, we've held 9 consecutive days of 100% grounding across our full pipeline, and 12+ consecutive days of 100% grounding on the user-facing briefings specifically. Every claim is tied to a verifiable source. This followed a period of inconsistency (more on that in the "what broke" section below). The distinction between 95% grounding reliability and 100% grounding reliability sounds small. It is not. "Usually trustworthy" and "trustworthy" are categorically different standards when someone is relying on a briefing to stay informed. The 5% you can't trust contaminates the 95% you can.

3. What 97 Topics Reveal About Information Diets

The conventional wisdom about personalized news assumes that personalization means preference within a category — your preferred football team, your local city, your political perspective within news. The 97 topics in our dataset suggest the reality is more interesting: the things people actually want to understand span categories in ways that mainstream publications aren't organized to serve.

The 7 categories our 97 topics fall into: Technology and AI, Business and Finance, Politics and Policy, Health and Science, Sports and Culture, Industry and Niche Verticals, and a catch-all Other category covering everything from aviation to specific geographic regions to niche academic fields. That last category — the long tail — accounts for a meaningful fraction of the topics and is the most revealing.

A few examples of actual topic selections from our 13 users: commercial aviation regulations, UFC and combat sports, vertical farming technology, central bank digital currencies, Formula 1 technical regulations, AI policy in the European Union, and the economics of independent game development. No single publication covers this combination. No newsletter serves this reader. The granularity at which people actually want to be informed — not "technology" but "EU AI policy," not "sports" but "UFC" — is finer than any general-interest publication can accommodate.

This is the structural limitation that makes true personalization genuinely different from preference-surfacing within a category. The user following aviation regulations and UFC doesn't want a news source that covers those categories more — they want a source that covers exactly those things and nothing else. That's not an editorial decision; it's a system architecture decision.

What this means for traditional newsletters: The observation here isn't that newsletters are bad. They're excellent at what they're designed for: serving a shared interest across a defined audience. The observation is that the user who needs coverage of aviation, UFC, vertical farming, and CBDC policy simultaneously has no good existing option. That gap in the market is, in part, what we're building toward.

4. The Retention Signal That Surprised Us

Newsletter churn is one of the more brutal metrics in media. Industry averages suggest 30-40% monthly churn for paid newsletters. Even well-run consumer email products see monthly churn rates that require constant top-of-funnel activity to compensate. The core problem is that a newsletter that covers your interests broadly is replaceable — there are often several alternatives, and switching costs are low.

What we didn't anticipate before launching was how much genuine personalization would change this dynamic.

Our most engaged user — we'll call them User R — has received 34 consecutive daily briefings and counting as of this writing. Not weekly. Not "most days." Every day. (There was a single gap on March 17 caused by a scheduler-timeout bug we've since fixed — we mention it because it's the kind of operational failure worth being honest about.) That streak represents nearly 5 weeks of continuous daily active use for a product in closed soft launch with a small user base and no significant marketing spend.

We're careful not to over-index on a single user, and the sample for any conversion claim here is small enough to warrant a footnote rather than a headline. Of the 3 external users (excluding team and test accounts) who completed the 7-day trial with continuous daily engagement, 1 has converted to paid. That's a 33% rate, but on a sample this small it's a directional signal, not a conclusion. For context, B2B SaaS averages 2-5% trial-to-paid conversion and consumer SaaS averages even lower — so even discounting heavily for sample size, the early signal is in a different regime than standard benchmarks predict.

The hypothesis: Personalization changes the switching calculus. A user who has selected their 5-10 specific topics, received calibrated coverage on them for several weeks, and built a morning reading habit around the briefing has created something difficult to replicate elsewhere. The briefing has become attuned to their interests not because we've explicitly modeled them (we haven't yet — there's no click-tracking or preference learning in the current system) but because their initial topic selections were specific enough to generate consistently relevant content.

This suggests that the value accumulates over time — the longer you use a personalized briefing, the harder it becomes to find an alternative that covers your exact combination of interests. That's a different retention mechanism than most news products, which compete on content quality and breadth. We're competing, essentially, on fit.

The open question: We don't yet know whether User R's streak and the 1-of-3 conversion signal are reproducible at scale, or whether they're artifacts of an early-adopter user base that's more self-selecting than a general audience would be. We think the underlying mechanism (specific topic selection = higher perceived switching cost) holds at scale, but this needs more data to test.

5. The Economics of AI-Generated Content

Brain Brief costs $6 per month or $50 per year. Those numbers were chosen through a combination of market research on comparable products and our own cost modeling, and the margin dynamics are worth being transparent about.

The core economic insight is this: once you've built a per-topic parallel generation pipeline, the marginal cost of adding a topic is nearly zero. The infrastructure that generates a briefing on one topic can generate a briefing on 10 topics at essentially the same fixed cost — the parallelization handles the scaling. This is structurally different from human-written content, where each additional topic requires proportionally more editorial labor.

This changes what's viable in niche content. A human-written newsletter covering aviation regulations and UFC and vertical farming simultaneously is economically impossible — the editorial resources required to produce quality coverage across three such different domains would require a much larger revenue base than any single-niche audience could provide. An AI generation pipeline covering all three costs nearly the same as covering one.

The implication for media economics is significant. The traditional trade-off between specificity and scale — niche publications serve small audiences with high content costs per subscriber; general publications serve large audiences with lower content costs per subscriber — breaks down when the marginal cost of topic coverage approaches zero. You can serve a 500-person audience that collectively wants 97 different things, and the economics work if the per-user subscription value is sufficient.

At $6/month with an early 1-of-3 external-trial conversion rate and near-zero marginal content costs, the early numbers suggest a viable unit economics profile — with the enormous caveat that 1-of-3 is not a conversion rate in any statistically meaningful sense yet. We're far too early to claim anything definitive about long-term margins, and customer acquisition cost remains the largest unknown. But the cost structure is different enough from legacy media that it's worth naming explicitly.

6. What Broke (and What We Fixed)

Transparency requires including the failures.

The most significant operational problem we encountered in our first month was a grounding-reliability regression in our generation pipeline, and the cause was more interesting than we'd assumed going in. Two things converged. First, Gemini's Google Search grounding is non-deterministic in a subtle way: the same query can occasionally return an empty response — no text, no grounding chunks — without raising an error. It's a silent-failure mode, not an exception you can catch on the obvious path. Second, during a refactor of our per-topic generation pipeline, a fallback handler for exactly this empty-response case was inadvertently removed. A related code path had the same gap. The result was that when Gemini returned empty, we were emitting a briefing skeleton rather than either retrying, rendering a degraded-but-honest fallback, or skipping the send.

A contributing factor compounded the problem: our batch SEO-content generation job was running a few hours before our user-briefing job each morning, and appears to have been exhausting grounding capacity (or hitting some unpublished rate ceiling) before the user job ran. The user briefings were getting the short end of a shared quota they didn't know they were sharing.

We caught this through our own quality monitoring before any user flagged it — but it was a genuine failure mode, and it's the kind of failure that matters more in AI-generated content than in human-written content. When a human journalist gets something wrong, the failure is visible and attributable. When an AI-generated briefing contains an unverified claim, the failure mode is invisible to the reader — the text reads the same whether or not it's grounded.

We fixed three things: we restored the fallback handler on the original code path, added a second fallback for the related path, and separated the two cron windows by several hours so the batch job can't starve the user-facing one. We also started persisting a per-briefing grounded flag so we can audit grounding rates over time without re-running inference. Since these fixes landed, we've held 9 consecutive days of 100% grounding across the full pipeline and 12+ days on user-facing briefings.

The lesson we'd offer to other teams building on similar infrastructure: treat the grounding rate metric as a binary threshold, not a continuous improvement metric — and assume your LLM vendor's grounding endpoint has silent-failure modes you haven't seen yet. 95% grounded is not "almost there." It's a qualitatively different product than 100% grounded, because the 5% failure rate is invisible to users and therefore can't be corrected by normal feedback mechanisms. Build for the threshold, not the trend, and log aggressively enough to detect a silent regression before your users do.

We also implemented a story deduplication layer after noticing that breaking stories were sometimes appearing across multiple topic briefings for the same user — a user tracking both "AI" and "Large Language Models" might receive the same GPT-5 announcement in both topic sections. The deduplication logic now checks for content overlap across a user's topics before assembly and consolidates repeated stories into the most relevant topic section.

These fixes delayed our planned launch date. In retrospect, the delay was correct.

7. What This Means for the Future

We want to be careful here. Thirteen users and ~1,130 briefings is a dataset, not a proof. The following claims are hypotheses grounded in early data, not conclusions.

First: Genuine personalization — not preference surfacing within a category, but topic selection at the level of specific interest — produces qualitatively different engagement than editorial curation. User R's 34-day consecutive streak and a 1-of-3 early conversion signal among engaged external trialers suggest this, but the sample is small.

Second: The long tail of information interests is substantially underserved. Our 97 topics span interests that no publication currently serves in combination. As AI generation costs continue to fall, the economic case for serving niche combinations of topics will strengthen.

Third: The grounding problem is more important than the generation problem. The limiting factor in AI-generated news isn't the quality of the language model — current models write well. It's the verifiability of the content. Any AI news product that can't guarantee 100% grounding reliability at scale is producing content that users can't fully trust, and trust is the only durable competitive advantage in information.

Fourth: The metric that matters most for AI-generated briefing products isn't open rate or click rate. It's whether the briefing was useful for the day's decisions and conversations. We don't yet have a good way to measure this directly. Building that feedback loop — which requires understanding what users did with their briefings, not just whether they opened them — is our most important unsolved product problem.

The open question we keep coming back to: The most valuable version of a personalized daily briefing isn't one that covers your topics. It's one that knows which of your topics had a development today that actually matters — and knows how to weight that against days when nothing significant happened in any of them. A briefing that says "here's nothing interesting across your 10 topics today, you can skip this one" would be a radically honest product. We're not there yet.

Closing

We built Brain Brief because we couldn't find a product that would tell us, specifically, what we needed to know each day. The ~1,130 briefings we've generated since launch have confirmed that this problem is real, that personalization at the topic level changes retention behavior, that the economics of AI-generated niche content are genuinely different from legacy media, and that grounding reliability is the make-or-break variable for trust.

We've also confirmed that 13 users and two months of data is the beginning of an answer, not the answer itself.

If you're building in adjacent spaces — AI content generation, personalized media, newsletter infrastructure — we're happy to discuss what we've found. If you're a researcher studying AI-generated content or personalization dynamics, this dataset is small but real, and we're open to sharing more granular data with the right partners.

Brain Brief is available at brainbrief.app. Seven-day free trial.

Methodology note: All figures in this article are drawn from Brain Brief's internal analytics as of April 20, 2026. The dataset covers approximately 1,133 briefings generated between February 14 and April 20, 2026: about 90 briefings delivered to our 13 closed-beta users, plus ~1,040 daily briefings generated for our 97 public topic pages. Retention and conversion metrics are computed only on the user-cohort subset. The 34-day consecutive-delivery figure reflects the single longest continuous daily streak for one user (a one-day gap on March 17 from a scheduler-timeout bug is excluded from the consecutive count; that user has received 39 briefings across 38 days since signup). The "1 of 3 external users" conversion figure counts users, excluding team and test accounts, who completed the 7-day trial with continuous daily engagement. The 9-day / 12-day grounding streak reflects 100% grounding across (a) the full generation pipeline including the public topic pages, and (b) user-facing briefings specifically. Small sample sizes should be interpreted accordingly.